I designed and executed an intercept survey to measure user satisfaction with a Mendix product meant to enable app management.

Mendix offers a low-code application (app) development platform to enterprises. Its core offering is an integrated development environment (IDE), but my research focused on user experiences in the online portal that supports the IDE.

Stakeholders in my unit were interested in implementing happiness tracking, in line with Google's HaTS (Happiness Tracking Surveys) method, to quantify user attitudes about products in our unit. This was part of a larger effort to evaluate Mendix products more consistently.

This study would therefore test user happiness (satisfaction, specifically) with one Mendix product, meant for tracking app-building tasks. The evaluation would also serve as a repeatable template for happiness tracking on this and other products over time.

The product in question was relatively new (less than a year old), so we primarily wanted to know whether users were satisfied with it, and what we could improve about their experience while using it. Keeping in mind the larger goal to make this study easily repeatable, including by non-researchers, I aimed for simplicity with one measure rather than several.

An additional challenge was to make this study comparable with one done about six months earlier, which tested users' attitudes about the beta version of the product. That prior study was conducted by product owner and developer colleagues using an internal Mendix survey tool (chosen at the time so the study could double as a test of the tool itself).

Overall, the goal for this project was to determine opportunities to improve the new product. Making it repeatable was an additional factor I accounted for in its design.

Research goal

I ran an intercept survey for two weeks to collect attitudinal data from users while they interacted with the product. Because users responded in the moment rather than remembering a past experience, recall bias was reduced. I kept the survey short to encourage more people to complete it.

I targeted users whose first login to the product was more than 10 days prior and who had not yet seen this survey. This was the same user group targeted in the prior survey.
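The targeting rule can be sketched as a simple eligibility check. This is only an illustration, assuming a hypothetical `User` record with `first_login` and `has_seen_survey` fields; the actual Mendix survey tool's data model and targeting configuration are not described here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Threshold taken from the study: first login more than 10 days ago.
MIN_DAYS_SINCE_FIRST_LOGIN = 10


@dataclass
class User:
    # Hypothetical fields, used for illustration only.
    first_login: datetime
    has_seen_survey: bool


def is_eligible(user: User, now: datetime) -> bool:
    """Return True if the user should be shown the intercept survey."""
    tenure = now - user.first_login
    long_enough = tenure > timedelta(days=MIN_DAYS_SINCE_FIRST_LOGIN)
    return long_enough and not user.has_seen_survey
```

Keeping the rule in one small function makes it easy to reuse the same targeting in later rounds, which matters when results need to stay comparable over time.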

Tools

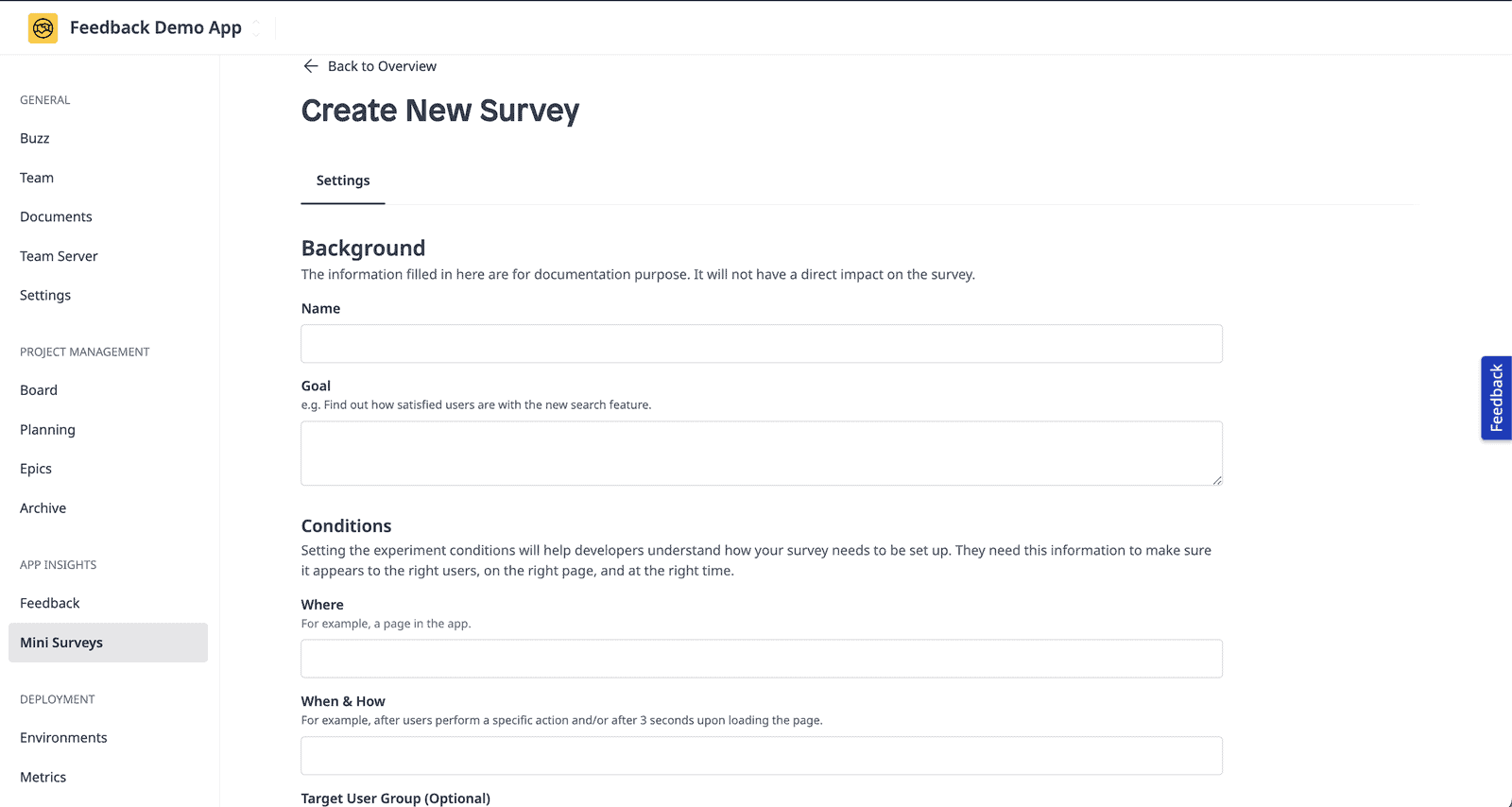

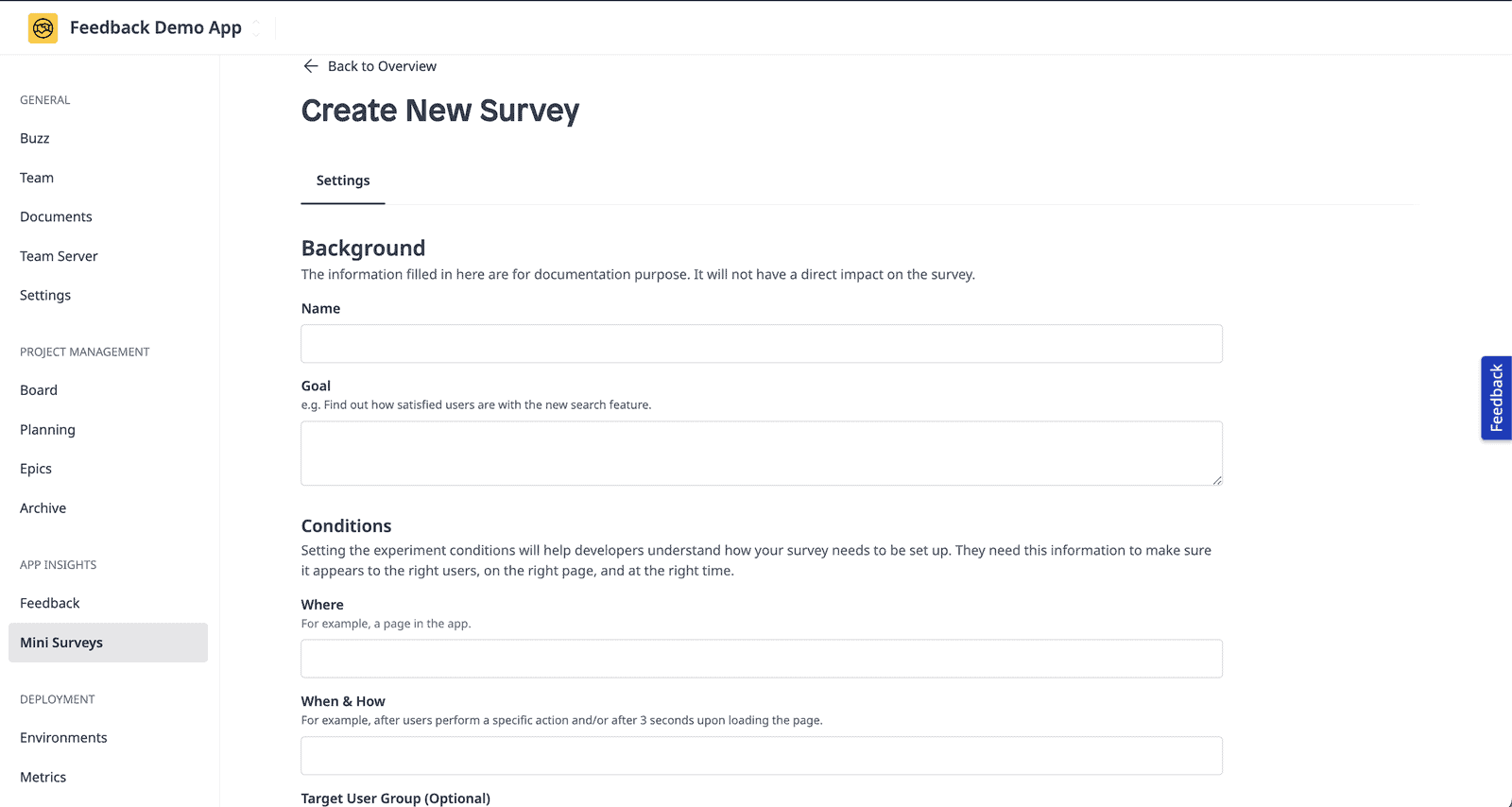

I used the same Mendix survey tool as the prior study so that I could compare results more easily, without needing to account for additional bias caused by the look and feel of a different survey tool.

I used MS Excel for quantitative data analysis, and Dovetail for qualitative analysis.

Overall, results showed that 78% of survey participants were satisfied with the product (5% completion rate; 8% margin of error at a 95% confidence level). Open text responses in the survey pointed to users’ desire for more efficiency and organization within the product.

I concluded that most users were likely to be satisfied with the product, and that these perceptions were consistent with those measured in the prior study of the beta version.
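For readers who want to reproduce this kind of estimate, here is a minimal sketch of the standard normal-approximation formulas for a proportion. It is an assumption that the reported margin of error was derived this way; the analysis itself was done in MS Excel, and neither the respondent count nor the exact formula is stated. The function names and the sample size of 100 in the usage lines are hypothetical.

```python
import math

# z-score for a two-sided 95% confidence level.
Z_95 = 1.96


def margin_of_error(p: float, n: int, z: float = Z_95) -> float:
    """Margin of error for an observed proportion p from n responses."""
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(moe: float, p: float = 0.5, z: float = Z_95) -> int:
    """Responses needed for a target margin of error.

    p = 0.5 is the conservative (worst-case) assumption for the proportion.
    """
    return math.ceil(z ** 2 * p * (1 - p) / moe ** 2)


# Illustration only: n = 100 is a made-up figure, not the study's respondent count.
example_moe = margin_of_error(p=0.78, n=100)

# Responses needed for an 8% margin of error under the worst-case p = 0.5.
needed = required_sample_size(moe=0.08)
```

When the study is repeated, running `required_sample_size` before launch gives a rough target for how many completed responses to collect before closing the survey.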

From the qualitative responses, the product team had a list of actionable next steps for improving the tool. They also had a baseline level of satisfaction (78% satisfied) to compare against future tests as they continued releasing features, and a repeatable study design for measuring user satisfaction periodically.